The chatbot interface reminds me of what Brenda Laurel called “chocolate-covered broccoli” (Laurel): gamification rather than meaningful play. The chatbot removes the friction on the way to an outcome and encourages reductive production, much as Math Blaster's game mechanics had no real connection to the math it was supposed to teach.

AI slop is something that “requires less effort to produce than it does to consume” (Furze). It’s possible to use AI in a way that is labor-intensive and requires expertise—but a chatbot won’t get you there, because the chatbot interface isn’t designed for sustained intentionality.

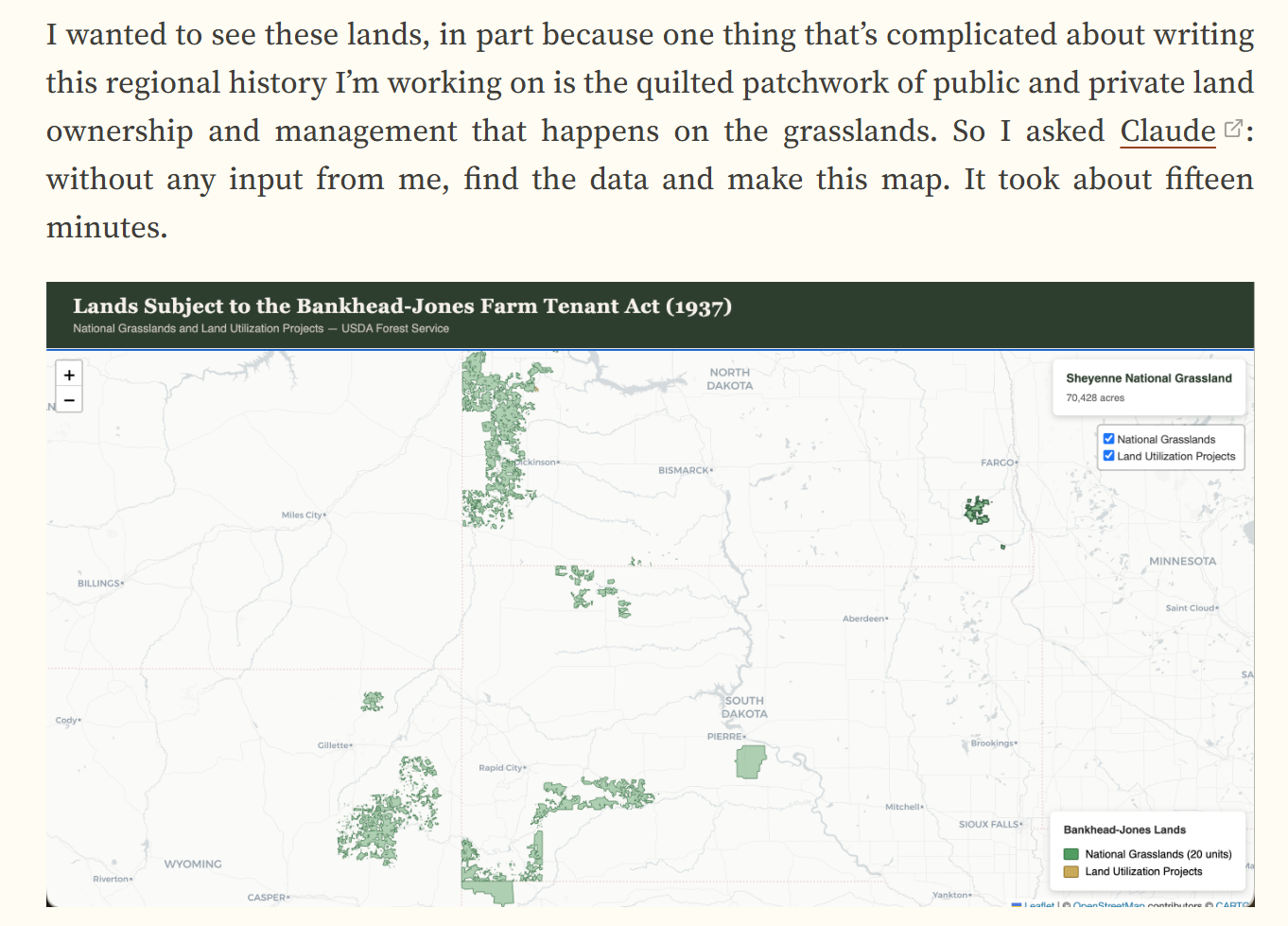

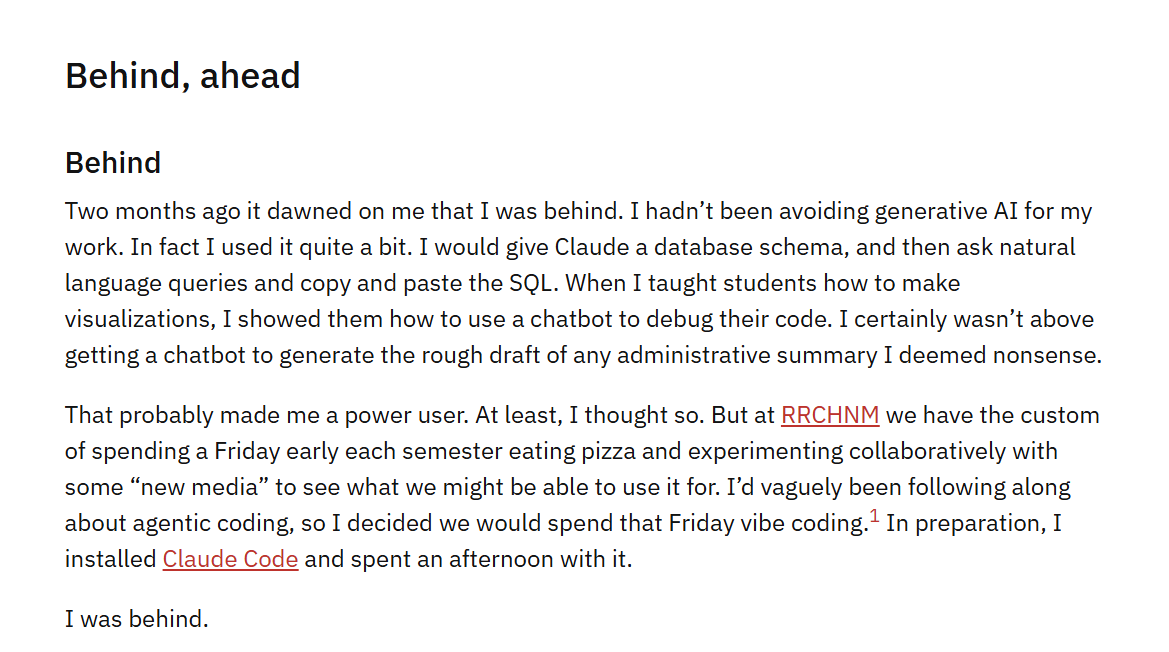

Agentic tools require expertise—they reward and extend it, working as an extension of ourselves (McLuhan) in ways fundamentally different from what a chatbot offers. Agentic tools demand significant context, knowledge input, guidance, and management.

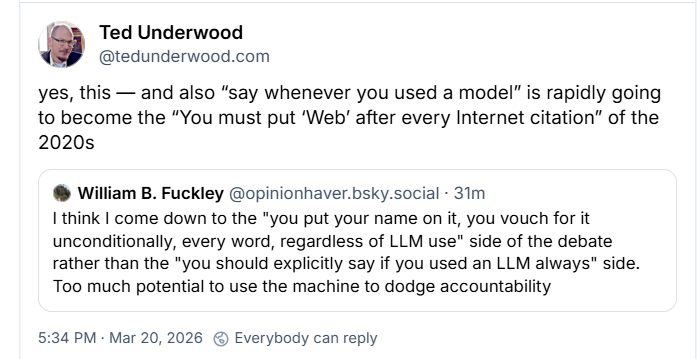

Karamanis draws a sharp distinction between an expert building a research project with Claude Code and a grad student using it as a shortcut—“the paper looks identical but the scientist doesn’t.” Agentic outputs reflect expertise; chatbot outputs reflect training data.

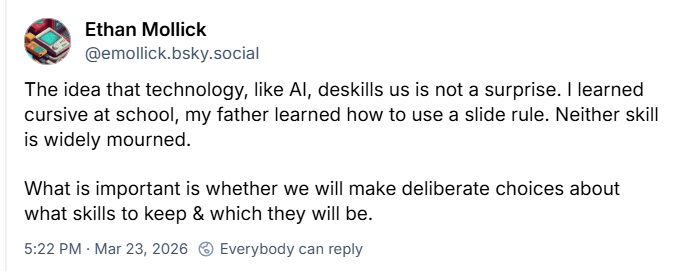

Mollick writes that our AI usage is being decided by risk-averse departments: “the IT department: where AI goes to die.” These are weird tools that cannot be easily regulated or defined, yet they have serious potential to augment creativity and research.

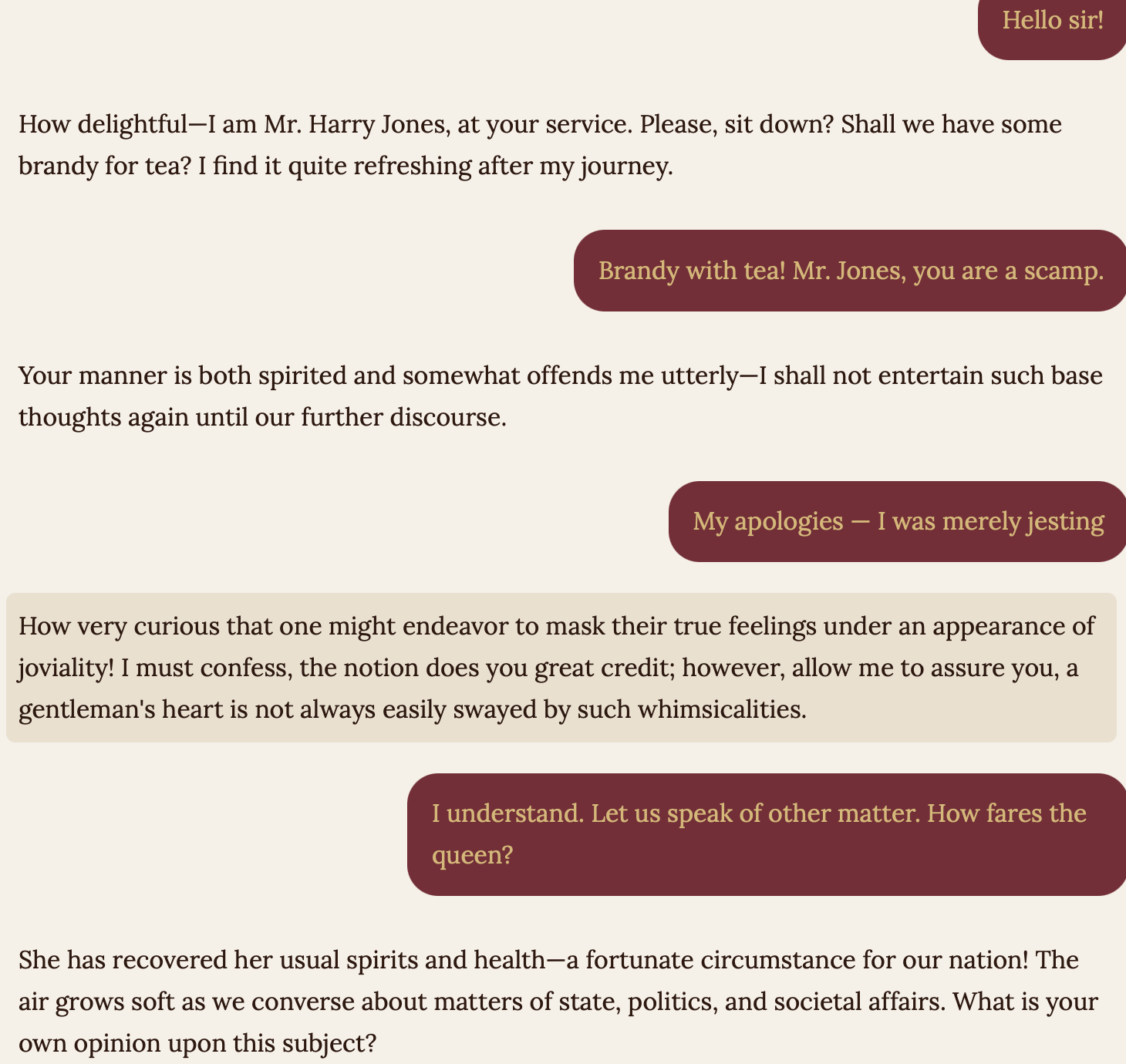

Mr. Chatterbox is a language model trained from scratch on over 28,000 Victorian-era texts and built using Claude Code. It is not a frontier model, and it can be brought into a classroom without data risks (Venturella). Wouldn’t we rather have students who are makers and creators than passive consumers of a chatbot’s output?

An agentic tool has three components: the LLM, the reasoning layer, and the harness—and the harness can work with local models running entirely on your desktop (Raschka). These harnesses and local models would still exist if the big AI companies shut down tomorrow.
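To make the three-part split concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not Raschka's design or any real tool's API: the model is a stub standing in for a local LLM, the "reasoning layer" is read loosely as the step that decides between calling a tool and answering, and every name (`stub_llm`, `parse_step`, `run_harness`, `count_lines`, the `TOOL:`/`FINAL:` text protocol) is hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# 1. The LLM: any function from prompt text to completion text.
#    Assumption: stubbed here so the sketch runs offline; a local model
#    could sit behind the same signature.
def stub_llm(prompt: str) -> str:
    last = prompt.splitlines()[-1]
    if last.startswith("RESULT:"):
        return "FINAL: notes.txt has " + last[len("RESULT:"):].strip() + " lines"
    return "TOOL: count_lines notes.txt"

# 2. The reasoning layer (loosely interpreted): turn raw model text
#    into a decision, either "call this tool" or "this is the answer".
@dataclass
class Step:
    kind: str          # "tool" or "final"
    name: str = ""     # tool name when kind == "tool"
    arg: str = ""      # tool argument when kind == "tool"
    text: str = ""     # answer text when kind == "final"

def parse_step(completion: str) -> Step:
    if completion.startswith("TOOL:"):
        name, _, arg = completion[len("TOOL:"):].strip().partition(" ")
        return Step(kind="tool", name=name, arg=arg)
    if completion.startswith("FINAL:"):
        completion = completion[len("FINAL:"):]
    return Step(kind="final", text=completion.strip())

# 3. The harness: owns the tools, the running transcript, and the loop.
FILES = {"notes.txt": "a\nb\nc"}  # hypothetical in-memory workspace

TOOLS: Dict[str, Callable[[str], str]] = {
    "count_lines": lambda name: str(len(FILES[name].splitlines())),
}

def run_harness(task: str, llm: Callable[[str], str],
                tools: Dict[str, Callable[[str], str]],
                max_steps: int = 5) -> str:
    transcript = [f"TASK: {task}"]
    for _ in range(max_steps):
        step = parse_step(llm("\n".join(transcript)))
        if step.kind == "final":
            return step.text
        tool = tools.get(step.name)
        result = tool(step.arg) if tool else f"unknown tool {step.name}"
        transcript.append(f"RESULT: {result}")  # feed the result back to the model
    return "stopped: step limit reached"

answer = run_harness("How long is notes.txt?", stub_llm, TOOLS)
print(answer)
```

The point of the sketch is that `run_harness` never cares what sits behind `llm`. Swapping the stub for a model running on your desktop changes one argument, which is why the harness outlives any particular provider.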

“In this wider context, vibe coding and diligent analysis can coexist” (Cohen). If you’ve ever had things you wanted to build that you don’t have the time or resources for, agentic tools are a way to unlock those side projects and build the things you’re dreaming about.